Scaling food tech isn’t failing – it’s revealing what was missed

From fermentation bottlenecks to the limits of cost reduction, Robyn Eijlander of NIZO food research reflects on the hidden complexity of scaling food innovation and the growing importance of collaboration, realism, and systems thinking.

There is a point in every promising technology where confidence gives way to friction. It rarely announces itself. There is no single failure, no dramatic collapse. Instead, the system begins to resist. Assumptions that held at small scale start to loosen. Interdependencies that were invisible in the lab begin to matter. What once felt controlled becomes conditional.

That transition – from proof to pressure – is where scale begins to assert itself.

For Robyn Eijlander, Program & Science Innovation Manager at NIZO food research and the BFF, these moments are not exceptions. They are patterns that repeat often enough to be recognized early, provided you know what to look for.

“Moving fermentation or any other food technology process from lab to pilot to demo scale always comes with challenges, even when many aspects have already been taken into account at an early stage,” she says. “There can be risks or bottlenecks identified along the way at various stages.”

What matters, however, is not the presence of those bottlenecks, but what they reveal. Some are part of normal process development. Others expose a deeper misalignment between what has been optimized and what will ultimately be required.

“When food-grade ingredients or processes have not been taken into account during laboratory development, you can basically start all over with your development phase,” she adds.

It is a blunt outcome, but a useful one, because it forces a distinction that runs through everything that follows: the difference between something that works and something that works under real conditions.

The early warning signs

That distinction becomes clearer when looking at where problems actually begin. The assumption that scale issues emerge at scale is one of the industry’s more persistent misconceptions.

In practice, the earliest signals appear much sooner, embedded in decisions that feel entirely reasonable at the time.

“Certain ingredients or inducers are used that are very acceptable and convenient at laboratory scale but are very expensive or difficult to get hold of once you start to produce at, for instance, 100,000 L scale,” Eijlander explains.

The problem here is not scientific validity. It is translation. Lab logic prioritizes control and speed. Industrial logic prioritizes availability, cost, and repeatability. When those priorities diverge early, the consequences surface later.

Other signals emerge not from what is used, but how processes are structured.

“When manual procedures are structural elements in the process, like manual mixing, those are not scalable,” she notes.

And then there are the omissions that undermine everything downstream.

Another red flag appears “when crucial data on the company’s developed process is missing, such as oxygen uptake rate measurements or safety assessments of strains used,” she says.

At that point, the issue is no longer a missing piece of information. It is that the process cannot be understood well enough to be trusted at scale. Without that understanding, every subsequent decision becomes speculative.

Coherence before scale

If early-stage decisions set the trajectory, the processes that succeed are not necessarily the most advanced. They are the most coherent.

“In the end, it’s all about creating a coherent story across biology, process, and economics,” Eijlander remarks.

That coherence is not a byproduct of scaling. It is what enables scaling in the first place.

“When you meet companies that show they have already done a lot of work towards designing a robust process, keeping their end application in mind, we get a lot more excited,” she says.

The difference lies in how constraints are treated. Rather than being addressed after the fact, they are anticipated early and built into design choices.

In fermentation, that starts with the organism itself.

“You can select a strain that can be manipulated to produce the protein of interest but also choose the one that fits your final process and application best,” she explains.

From there, the logic extends into downstream processing, where early decisions either simplify or complicate everything that follows.

“If the protein is stably secreted by the cells, you do not need a cell breaking step before filtration,” she says.

The result is not a process free of challenges, but one that behaves predictably.

“Being able to show reproducibility and robustness across independent runs or process steps is also a big green flag,” she adds.

Predictability, in this context, turns scale into an engineering problem rather than a guessing game.

Where scale actually breaks

That predictability is precisely what is tested as systems move beyond controlled environments. While scaling is often described as a smooth progression, in reality it introduces entirely new dynamics.

“There needs to be a balance between size or volume and costs,” Eijlander says. “Do not underestimate the complexity of downstream processing when scaling to higher volumes.”

In many cases, production is not where the system fails. It is recovery.

“In the case of food ingredient production by fermentation, many companies underestimate how hard it is to recover product from the ferment, both technically and economically,” she notes.

This is where the economics of scale are decided. Yield may increase, but if recovery becomes inefficient, the system as a whole becomes less viable.

“Even if you can make it bigger, if you cannot recover it economically, what’s the point?”

The same shift appears elsewhere. At larger volumes, entirely new constraints emerge.

“We have seen filtration membrane clogging issues at large scale that are not encountered when processing at lower volumes,” she explains.

Scaling, then, is not simply about size. It is about how systems behave under different conditions and whether those behaviors have been anticipated.

The in-house inflection point

As those conditions become more complex, another tension comes into focus: control versus capability.

Early-stage companies tend to build internally, driven by speed and learning. But there is a point where that approach begins to work against them.

“I think that moving from learning phase to execution phase is a very critical point where such a disadvantage can start to slip in,” Eijlander reflects.

At that stage, the requirement is no longer experimentation, but validation.

“Once you’ve optimized your strain, defined your processes and are ready to demonstrate and validate it, you need data that is reproducible and scalable,” she says.

Generating that data requires infrastructure, but also experience.

“We have seen examples of start-ups asking us if we would be interested in buying their pilot scale product development line, unused and still in the box, because they outgrew it before they were even able to install and use it,” she recalls.

This is less about timing than alignment.

“The lack of expertise and experience in consistent control strategies at scale can be a disadvantage,” she adds.

That is why the most effective approach is often selective rather than all-or-nothing.

“For example, a company may be very well equipped to optimize upstream in-house, but choose to work together with a CDMO for downstream processing.”

Access as strategy

That selectivity is increasingly shaping how companies think about infrastructure itself. Rather than building everything internally, access-based models are emerging as a strategic alternative. Yet adoption remains uneven. “It varies. I think there is definitely room for improvement,” Eijlander says.

Hesitation often comes down to control, familiarity, and timing. Some teams wait too long before engaging external partners. Where the model is adopted early, the benefits are clearer.

“Vivici is a great example,” she says. “They embraced partnership with NIZO at a very early stage, learning and developing using available lab and pilot equipment, leveraging available expertise and experience.”

The advantage is not just speed. It is alignment. “They have grown and now have their own equipment that they know works for them,” she adds.

Investors play a role here too. “It’s all about de-risking; not only the product and the process from a technical point of view, but also the investments from an economical point of view.”

In that sense, access is not a compromise. It is a deliberate strategy. “Open access upscaling facilities should be seen as an opportunity to enable companies to gain access to professional, food-grade scaling capacity without their own capital-intensive investments.”

Collaboration as execution

If access defines what is possible, collaboration determines how effectively it is used. And yet, collaboration is often treated as a transaction rather than a capability.

“A scale-up partnership based solely around sending data and protocols and having the CDMO execute and report back is not a good working partnership,” Eijlander says.

That model produces activity, but not progress. “You also want to avoid generating lots of data without any of the data leading to a certain decision,” she adds.

What replaces it is a more demanding form of engagement. “Transparent communication based on trust is key.”

That transparency must go both ways. “If a CDMO just runs what you give them and does not confront you immediately with scale realities, it’s not going to help you further. And if you refrain from sharing raw data, it’s not going to help you either.”

Success is measured not by output, but by clarity. A partnership works, she says, “when each scale step answers the questions it was supposed to answer and helps to define the next step.”

Food, not technology

For all the complexity, the objective remains simple. “If you are able to provide something nutritious, affordable and tasty, you’re a winner,” Eijlander says.

The challenge is achieving all three simultaneously. “It’s very rewarding. However, you’re not going to get there with technology only.”

Trade-offs are unavoidable. “If we try to mimic conventional dairy products too much in terms of taste or texture, we see the products lose quality,” she explains.

And cost remains a hard constraint. “If the costs are not low enough, the product simply will not fly.”

Even the most advanced technologies operate within that reality. “As long as there are ultra-cheap agricultural supply chains, technology is not going to win,” she says.

Blending pathways

Which is why the future may not be about replacement, but combination. “Hybrid and blended foods, in the short term, have a lot to offer when approached this way,” Eijlander says.

The logic is pragmatic. Existing systems have strengths. New technologies add capabilities. “There is so much to say for conventional foods, and at the same time there is so much to be gained in terms of sustainability by blending that with other resources,” she explains.

The result is not compromise, but adaptation. “I really think it could be the best of both worlds.”

And crucially, it reflects how products succeed in practice. “Blending plant-based with conventional, cultivated or precision fermented ingredients will enable the development of tasty, affordable and nutritional food products.”

The limits of data

A similar gap exists between potential and practice in how the industry handles data. “I don’t think availability of data is the issue,” Eijlander says.

The challenge lies in interpretation and use. “The main steps still to be taken are to understand what data is good data, and how to actually use it to drive decisions across the chain.”

Within companies, data drives optimization. Across the industry, it remains fragmented. “What about using collective data to benefit the industry as a whole?”

The hesitation is understandable. Data is sensitive, and concerns around misuse or misinterpretation remain.

But the opportunity is significant. “Automation, gathering and using data to predict, adjust and optimize is what will increase chances of success,” she says.

A system that decides

Ultimately, the biggest constraints are not technical. “Sustainability only works if it aligns with cost,” Eijlander says.

And the system reinforces that reality. “The system rewards short-term, low-risk, siloed decisions while talking about long-term, systemic change.”

That tension shapes what reaches the market. “And in the end, it is the consumer that will determine whether an innovative food product becomes a success.”

But that consumer is not fixed. “The variety is huge. So who defines what the consumer wants?”

Planning trajectories

Across all of this, a consistent pattern emerges. Success is not defined by individual breakthroughs, but by how they connect.

Those that succeed, Eijlander says, are “companies that manage to stay one or more steps ahead each time, planning trajectories rather than steps.”

That requires seeing the full system, or as she puts it, “being able to see the full chain end-to-end.” It also requires recognizing that scale is not achieved alone. “Companies that are truly able to embrace and structure partnerships will stand a better chance,” she concludes.

In the end, scale is not just a function of technology. It is a reflection of how well everything else has been understood.

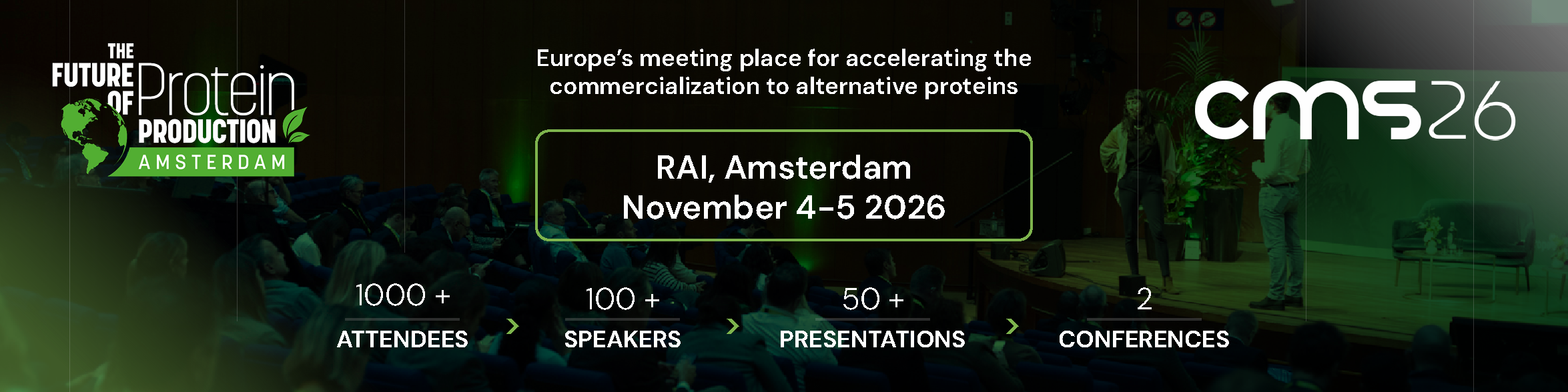

Robyn Eijlander is one of 24 experts shaping the agenda for The Future of Protein Production/Cultured Meat Symposium Amsterdam on 4 and 5 November 2026, taking place at RAI Amsterdam. To join more than 100 speakers and more than 750 other attendees, book your conference ticket today and use the code 'PPTI10' for an extra 10% discount on the current Super Early Bird rate of €995 (closes 29 May 2026).

If you have any questions or would like to get in touch with us, please email info@futureofproteinproduction.com